Auto-da-fé has come to mean, in the common lexicon, the burning of a heretic. Give it more time and the phrase will mean self-immolation. Let me push it along a bit. The recent first death in an accident involving a self-driving car should cause the auto automobile to combust spontaneously: self-driving auto-da-fé, as it were.

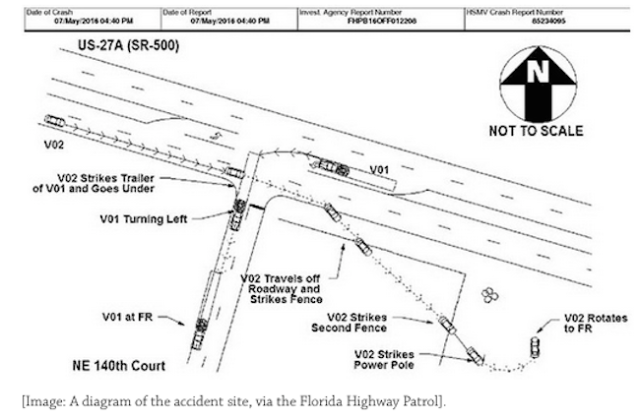

The accident, involving a Tesla, occurred a couple of months ago in Williston, Fla. In a recent article for his blog BLDGBLOG, “Robot War and the Future of Perceptual Deception,” Geoff Manaugh suggests that scientific research and planning for the advent of self-driving cars must address questions raised by the accident. On May 7, a Tesla plowed broadside into an 18-wheel tractor-trailer when its autopilot computer confused the rig’s white side for the sky.

But Manaugh, far from concluding that self-driving cars are a potential transportation system that should be abandoned, avers that U.S. highway systems will have to be completely redesigned. He goes on to speculate, in light of the Tesla accident, that the military will want to protect potential military targets by harnessing the flaws of computer perception to baffle enemy automated weaponry, such as self-driving tanks and drones. But isn’t everything a possible target in modern warfare? How do you protect a highway interchange from enemy drones without saddling it with features that spoof citizens’ own self-driving cars? Going even further, Manaugh suggests that the interiors of hospitals and, by extension, any facility (or home) set up to accommodate robots for a growing number of tasks will have to be completely redesigned.

Why don’t we just tear our entire society down and rebuild it to be future-friendly? That might cost a lot, but progress demands it. Or does it?

Scientific American has a more straightforward article on the implications of the Tesla crash for self-driving cars. “Deadly Tesla Crash Exposes Confusion Over Automated Driving,” by Larry Greenemeier and published yesterday, notes that a few years ago Google shifted its overall self-driving-car project from its focus on cars where the driver chooses among separate “self-driving features” (or the car chooses automatically in an emergency) to one that fully devolves all driving functions to the automobile itself:

Google had started down a similar road toward offering self-driving features about six years ago—but it abruptly switched direction in 2013 to focus on fully autonomous vehicles, for reasons similar to the circumstances surrounding the Tesla accident. “Developing a car that can shoulder the entire burden of driving is crucial to safety,” Chris Urmson, director of Google parent corporation Alphabet, Inc.’s self-driving car project, told Congress at a hearing in March. “We saw in our own testing that the human drivers can’t always be trusted to dip in and out of the task of driving when the car is encouraging them to sit back and relax.”

In short, facing riskier development problems, Google doubled down.

My contention remains that humans’ own onboard automatic driving systems (that is, their brains) are certainly flawed, but are more reliable than the computerized variety. If and when all cars drive themselves, and driving becomes a matter of millions of vehicular computers interacting with each other’s host vehicles and simultaneously with their roadway environments, this will become swiftly evident. Has anyone thought through how to switch from one system to the other without endangering those who still rely on the former? In replacing the system we have with a system we want, we will certainly cut corners to reduce the mammoth price tag. The whole idea is the epitome of pie in the sky. So it remains vital not to go there: We need to initiate the self-driving auto-da-fé system.